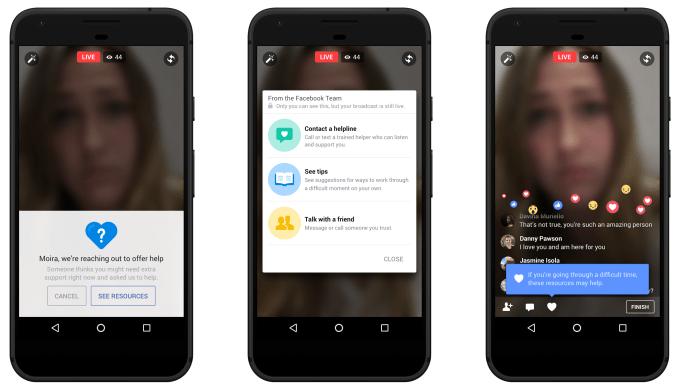

This is software to save lives. Facebook’s new “proactive detection” artificial intelligence technology will scan all posts for patterns of suicidal thoughts, and when necessary send mental health resources to the user at risk or their friends, or contact local first-responders. By using AI to flag worrisome posts to human moderators instead of waiting for user reports, Facebook can decrease how long it takes to send help.

Facebook previously tested using AI to detect troubling posts and more prominently surface suicide reporting options to friends in the U.S. Now Facebook will scour all types of content around the world with this AI, except in the European Union, where General Data Protection Regulation privacy laws on profiling users based on sensitive information complicate the use of this tech.

Facebook also will use AI to prioritize particularly risky or urgent user reports so they’re more quickly addressed by moderators, and will use tools that instantly surface local-language resources and first-responder contact info. It’s also dedicating more moderators to suicide prevention, training them to deal with the cases 24/7, and now has 80 local partners like Save.org, National Suicide Prevention Lifeline and Forefront from which to provide resources to at-risk users and their networks.

“This is about shaving off minutes at every single step of the process, especially in Facebook Live,” says VP of product management Guy Rosen. Over the past month of testing, Facebook has initiated more than 100 “wellness checks” with first-responders visiting affected users. “There have been cases where the first-responder has arrived and the person is still broadcasting.”

The idea of Facebook proactively scanning the content of people’s posts could trigger some dystopian fears about how else the technology could be applied. Facebook didn’t have answers about how it would avoid scanning for political dissent or petty crime, with Rosen merely saying “we have an opportunity to help here so we’re going to invest in that.” There are certainly massive beneficial aspects to the technology, but it’s another space where we have little choice but to hope Facebook doesn’t go too far.

[Update: Facebook’s chief security officer Alex Stamos responded to these concerns with a heartening tweet signaling that Facebook does take seriously the responsible use of AI.

Facebook CEO Mark Zuckerberg praised the product update in a post today, writing that “In the future, AI will be able to understand more of the subtle nuances of language, and will be able to identify different issues beyond suicide as well, including quickly spotting more kinds of bullying and hate.”

Unfortunately, after TechCrunch asked if there was a way for users to opt out of having their posts scanned, a Facebook spokesperson responded that users cannot opt out. They noted that the feature is designed to enhance user safety, and that support resources offered by Facebook can be quickly dismissed if a user doesn’t want to see them.]

Facebook trained the AI by finding patterns in the words and imagery used in posts that have been manually reported for suicide risk in the past. It also looks for comments like “are you OK?” and “Do you need help?”

“We’ve talked to mental health experts, and one of the best ways to help prevent suicide is for people in need to hear from friends or family that care about them,” Rosen says. “This puts Facebook in a really unique position. We can help connect people who are in distress to friends and to organizations that can help them.”

How suicide reporting works on Facebook now

Through the combination of AI, human moderators and crowdsourced reports, Facebook could try to prevent tragedies like when a father killed himself on Facebook Live last month. Live broadcasts in particular have the power to wrongly glorify suicide, hence the necessary new precautions. They also affect a large audience, since everyone sees the content simultaneously, unlike recorded Facebook videos, which can be flagged and taken down before many people view them.

Now, if someone is expressing thoughts of suicide in any type of Facebook post, Facebook’s AI will both proactively detect it and flag it to prevention-trained human moderators, and make reporting options for viewers more accessible.

When a report comes in, Facebook’s tech can highlight the part of the post or video that matches suicide-risk patterns or that’s receiving concerned comments. That spares moderators from having to skim through a whole video themselves. The AI prioritizes these user reports as more urgent than other types of content-policy violations, such as depictions of violence or nudity. Facebook says that these accelerated reports get escalated to local authorities twice as fast as unaccelerated reports.

Mark Zuckerberg gets teary-eyed discussing inequality during his Harvard commencement speech in May

Facebook’s tools then bring up local-language resources from its partners, including telephone hotlines for suicide prevention and nearby authorities. The moderator can then contact the responders and try to send them to the at-risk user’s location, surface the mental health resources to the at-risk user themselves or send them to friends who can talk to the user. “One of our goals is to ensure that our team can respond worldwide in any language we support,” says Rosen.

Back in February, Facebook CEO Mark Zuckerberg wrote that “There have been terribly tragic events — like suicides, some live streamed — that perhaps could have been prevented if someone had realized what was happening and reported them sooner . . . Artificial intelligence can help provide a better approach.”

With more than 2 billion users, it’s good to see Facebook stepping up here. Not only has Facebook created a way for users to get in touch with and care for each other; it has also, unfortunately, created an unmediated real-time distribution channel in Facebook Live that can appeal to people who want an audience for violence they inflict on themselves or others.

Creating a ubiquitous global communication utility comes with responsibilities beyond those of most tech companies, which Facebook seems to be coming to terms with.

Featured Image: Three Images/Getty Images
