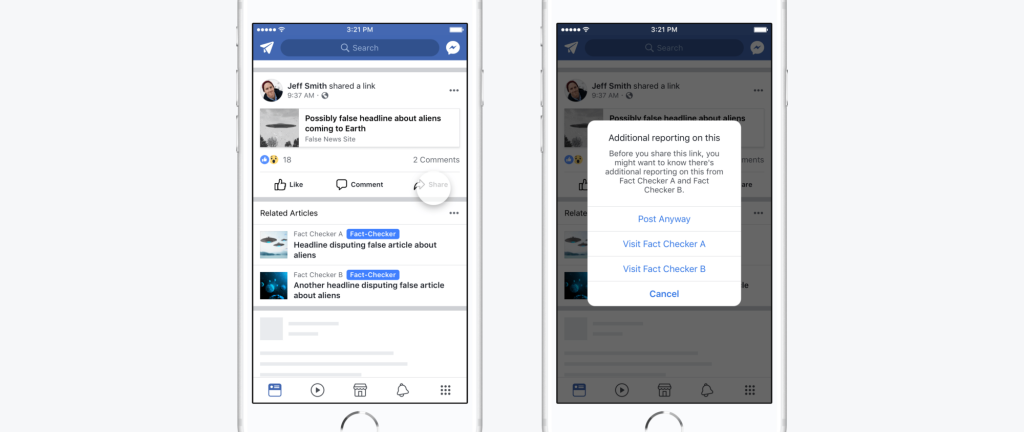

Facebook announced two changes today that it hopes will make it easier to stanch the spread of fake news. The first change is to the News Feed, where users will no longer see “Disputed Flags,” or red badges displayed beneath articles flagged by Facebook’s third-party fact-checkers. Instead, they will see Related Articles, or links to content from reputable publishers. The second change is a new initiative to help Facebook understand how people judge the accuracy of information based on the news sources they use. It won’t result in any immediate changes to the News Feed, but is meant to help the company gauge how well its efforts to stop the spread of misinformation are working.

Along with Google and Twitter, Facebook is currently under pressure from critics who say it hasn’t done enough to combat fake news on its platform, including articles by “troll farms” that disseminate misinformation to make a profit or sway public opinion on politics and other hot-button issues. The issue became more urgent during the presidential election, and all three companies have been called to testify in congressional hearings over how their platforms were used by Russian-backed trolls to influence U.S. politics.

Almost exactly one year ago, Facebook implemented several changes to fight fake news, including easier steps to report articles, partnerships with fact-checking organizations and features, like Disputed Flags, that alert people when they’re about to read or share articles that have been identified by fact-checkers as fake news. Facebook also started demoting fake news links, which it says usually means they lose 80 percent of their traffic.

In today’s announcement, Facebook product manager Tessa Lyons said Facebook decided to replace Disputed Flags with Related Articles because the red badges actually had the effect of reinforcing beliefs.

“Academic research on correcting misinformation has shown that putting a strong image, like a red flag, next to an article may actually entrench deeply held beliefs, the opposite effect to what we intended,” Lyons wrote. “Related Articles, by contrast, are simply designed to give more context, which our research has shown is a more effective way to help people get to the facts. Indeed, we’ve found that when we show Related Articles next to a false news story, it leads to fewer shares than when the Disputed Flag is shown.”

Launched in 2013, Related Articles are what Facebook calls the links it displays on News Feeds after users finish reading an article. Related Articles were originally created to boost engagement and prevent people’s News Feeds from being flooded with silly memes by directing them to content from reputable publishers instead. Then in April of this year, Facebook announced a test that showed Related Articles before articles about trending topics, with the intent of giving users “easier access to additional perspectives and information.”

Another blog post written by the team leading Facebook’s efforts against fake news (product designer Jeff Smith, user experience researcher Grace Jackson and content strategist Seetha Raj) offers more insight into today’s announcement. Over the past year, the team says they visited different countries to conduct research into how misinformation spreads in different contexts and how people react to “designs meant to inform them that what they’re reading is fake news.”

As a result, they identified four major ways the Disputed Flags feature could be improved.

First, the team wrote, Disputed Flags need to tell people immediately why fact-checkers dispute an article, because most users won’t bother clicking on links to more information. Second, strong language or images like a red flag sometimes backfire by reinforcing beliefs, even if they’re marked as false. Third, Facebook only applied Disputed Flags after two fact-checking organizations had determined an article was false, but that meant it often didn’t act quickly enough, especially in countries with very few fact-checkers.

Finally, some of Facebook’s fact-checking partners rated articles on a scale (for example, “false,” “partly false,” “unproven” or “true”), so context and nuance were lost when a Disputed Flag was applied, especially on rare occasions when two organizations fact-checked the same article but came to different conclusions about its credibility.

Showing Related Articles before someone clicks on a link is meant to address all of those issues by making it easier to get context, requiring only one fact-checker’s review, working even for articles that got different ratings and preventing the kind of reaction that might cause someone to dig in their heels about a belief, even if it is wrong.

Furthermore, even though the new application of Related Articles doesn’t “meaningfully change” clickthrough rates, Facebook’s anti-fake news team says it leads to fewer shares. In a bid to increase transparency, users will also now see badges that identify which fact-checkers reviewed an article.

“As some of the people behind this product, designing solutions that support news readers is a responsibility we take seriously,” wrote Smith, Jackson and Raj. “We will continue working hard on these efforts by testing new treatments, improving existing treatments and collaborating with academic experts on this complicated misinformation problem.”

Featured Image: Facebook
